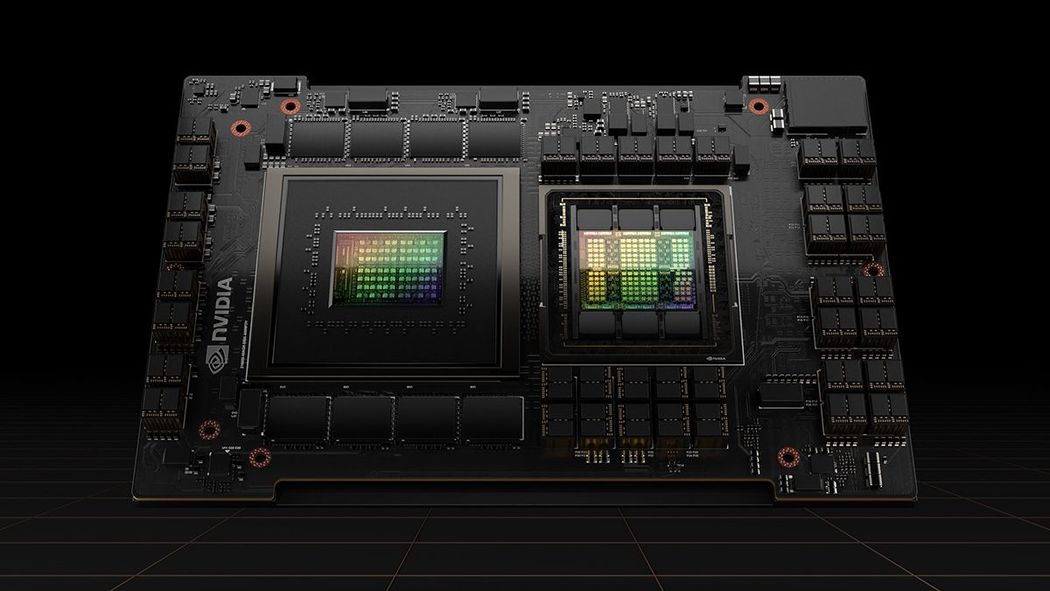

AI models continue to demand computational horsepower at an astounding rate. With generative AI going mainstream and more models moving from concept (training) to production (inference), this demand will only grow. To meet it, Paperspace is launching support for the newly introduced NVIDIA H100 Tensor Core GPU. Built on the NVIDIA Hopper architecture, the H100 represents the next major leap in accelerated computing, offering unprecedented performance, scalability, and security across AI and HPC workloads.

“With the momentum behind compute-intensive generative AI and large language models, we're seeing a dramatic increase in the performance requirements from our customers. The NVIDIA H100 GPU is the response to this surging demand: a massive leap forward in terms of training and inference throughput that is needed to power this next generation of AI applications.” - Daniel Kobran, Chief Operating Officer, Paperspace.

“As Paperspace — an Elite member of the NVIDIA Cloud Service Provider Partner Program — launches support for the new NVIDIA H100 GPU, developers building and scaling their AI applications on Paperspace will now have access to unprecedented performance through the world's most powerful GPU for AI.” - Dave Salvator, Director of Accelerated Computing, NVIDIA.

The NVIDIA H100 GPU includes a dedicated Transformer Engine and is heavily optimized for developing, training, and deploying generative AI, large language models (LLMs), and other popular model architectures. The Transformer Engine supports FP8 precision, and NVIDIA reports up to 30x faster AI inference on LLMs compared with the prior-generation NVIDIA A100 Tensor Core GPU.

The addition of the NVIDIA H100 GPUs on Paperspace represents a significant step forward in our commitment to providing our customers with hardware that can support the most powerful state-of-the-art models. We're excited to see what our users will build with the NVIDIA H100 and the impact it will have on the broader AI ecosystem.

This new offering is in Early Access. Register now to join the waitlist.

If you have product or pricing questions or if you're interested in learning more about how our Professional Services team can assist you with your AI projects, please submit a request.